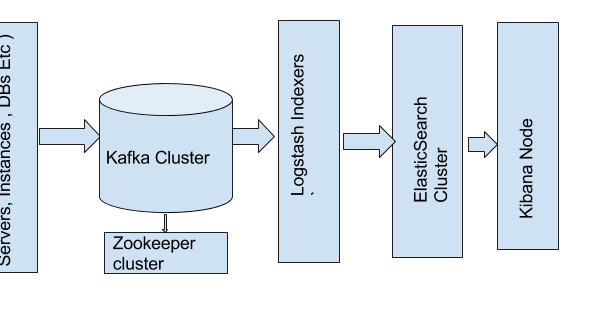

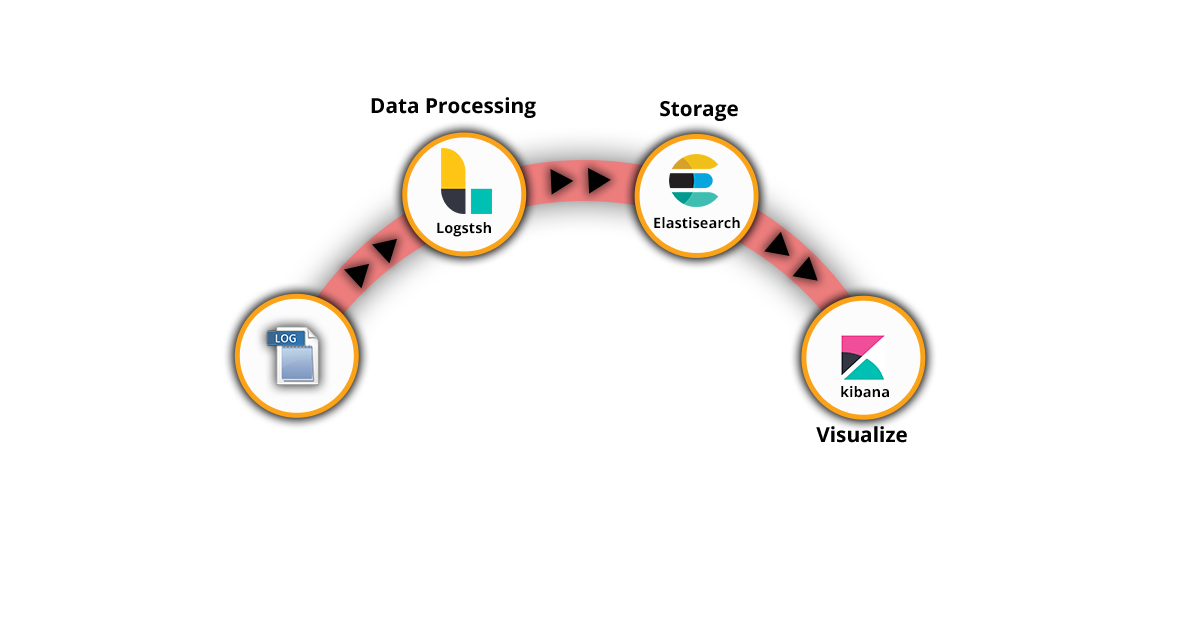

At the heart of any SIEM system is log data. Whether from servers, firewalls, databases, or network routers, logs provide analysts with the raw material for gaining insight into events taking place in an IT environment.

Before this material can be turned into a resource, however, several crucial steps need to be taken. The data needs to be collected, processed, normalized, enhanced, and stored. These steps, usually grouped together under the term "log management," are a must-have component in any SIEM system.

It is no coincidence, therefore, that the ELK Stack, today the world's most popular open-source log analysis and management platform, is part and parcel of most of the open-source SIEM solutions available. Taking care of the collection, parsing, storage, and analysis, ELK is part of the architecture for OSSEC Wazuh, SIEMonster, and Apache Metron.

If log management and log analysis were the only components in SIEM, the ELK Stack could be considered a valid open-source solution. But when we defined what a SIEM system actually is, a long list of components was listed in addition to log management. This article will try to dive deeper into the question of whether the ELK Stack can be used for SIEM, what is missing, and what is required to augment it into a fully functional SIEM solution.

Log Collection

As mentioned above, SIEM systems involve aggregating data from multiple data sources. These data sources will vary depending on your environment, but most likely you will be pulling data from your application, the infrastructure level (e.g. servers, databases), security controls (e.g. firewalls, VPN), network infrastructure (e.g. routers, DNS), and external security databases. This requires aggregation capabilities, which the ELK Stack is well suited to handle.

Using a combination of Beats and Logstash, you can build a logging architecture consisting of multiple data pipelines. Beats are lightweight log forwarders that can be used as agents on edge hosts to track and forward different types of data, the most common beat being Filebeat for forwarding log files. Logstash can then be used to aggregate the data from the beats, process it (see below), and forward it to the next component in the pipeline.

Because of the amount of data involved and the different data sources being tapped into, multiple Logstash instances will most likely be required to ensure a more resilient data pipeline. Not only that, a queuing mechanism will need to be deployed to make sure data bursts are handled and disconnects between the various components in the pipeline do not result in data loss. Kafka is often the tool used in this context, installed before Logstash (other tools, such as Redis and RabbitMQ, are also used).

The ELK Stack alone, therefore, will most likely not be enough as your business, and the data it generates, grows. An organization looking into using ELK for SIEM must understand that additional components will need to be deployed to augment the stack.

Log Processing

Collecting data and forwarding it is, of course, just one part of the job Logstash does in a logging pipeline. Another crucial task, and one extremely important in the context of SIEM, is that of processing and parsing the data.

All those data source types outlined above generate data in different formats. To be successful in the next step, that of searching the data and analyzing it, the data needs to be normalized. This means breaking down the different log messages into meaningful field names, mapping the field types correctly in Elasticsearch, and enriching specific fields where necessary.

The importance of this step cannot be overstated. Without correct parsing, your data will be meaningless when you attempt to analyze it in Kibana. Logstash is a powerful tool to have on your side for this key task.
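To make the normalization step more concrete, here is a minimal Python sketch of the kind of work a Logstash filter chain (typically grok for pattern matching, date for timestamps, mutate for type conversion, and an enrichment filter such as geoip or translate) performs on each event. It is not Logstash code. It parses two differently formatted sources into one shared set of field names, converts numeric strings to integers, normalizes both timestamps to ISO 8601 in UTC, and enriches each event with a derived field. The log layouts, the ECS-style field names, the `10.0.0.0/8` internal range, and all function names are illustrative assumptions.

```python
import ipaddress
import re
from datetime import datetime, timezone

# Assumption: the organization's internal address space (used for enrichment).
INTERNAL_NET = ipaddress.ip_network("10.0.0.0/8")

# Grok-like pattern for an OpenSSH "Failed password" line in syslog format.
SSH_FAILED = re.compile(
    r"^(?P<ts>\w{3} +\d{1,2} \d{2}:\d{2}:\d{2}) (?P<host>\S+) sshd\[(?P<pid>\d+)\]: "
    r"Failed password for (?:invalid user )?(?P<user>\S+) "
    r"from (?P<src_ip>\S+) port (?P<src_port>\d+)"
)


def parse_ssh(line: str, year: int) -> dict:
    """Split a syslog sshd line into named, typed fields."""
    m = SSH_FAILED.match(line)
    if m is None:
        # Keep the raw line and tag it, similar in spirit to grok's failure tag.
        return {"message": line, "tags": ["_parsefailure"]}
    # Syslog timestamps carry no year or time zone; assume `year` and UTC.
    ts = datetime.strptime(f"{year} {m['ts']}", "%Y %b %d %H:%M:%S")
    return {
        "@timestamp": ts.replace(tzinfo=timezone.utc).isoformat(),
        "host.name": m["host"],
        "event.action": "ssh_login_failed",
        "user.name": m["user"],
        "source.ip": m["src_ip"],
        "source.port": int(m["src_port"]),  # string -> integer
        "process.pid": int(m["pid"]),
    }


def parse_firewall(line: str) -> dict:
    """Map a hypothetical CSV firewall record onto the same field names."""
    # Assumed layout: epoch_seconds,action,src_ip,src_port,dst_ip,dst_port
    epoch, action, src_ip, src_port, dst_ip, dst_port = line.strip().split(",")
    return {
        "@timestamp": datetime.fromtimestamp(int(epoch), tz=timezone.utc).isoformat(),
        "event.action": "firewall_" + action.lower(),
        "source.ip": src_ip,
        "source.port": int(src_port),
        "destination.ip": dst_ip,
        "destination.port": int(dst_port),
    }


def enrich(event: dict) -> dict:
    """Add a derived field: is the source address internal or external?"""
    ip = event.get("source.ip")
    if ip is not None:
        inside = ipaddress.ip_address(ip) in INTERNAL_NET
        event["source.zone"] = "internal" if inside else "external"
    return event


if __name__ == "__main__":
    import json

    raw_ssh = ("Mar  3 10:15:02 web-01 sshd[4242]: Failed password for "
               "invalid user admin from 203.0.113.7 port 51515 ssh2")
    raw_fw = "1700000000,DENY,10.1.2.3,40000,198.51.100.9,443"
    for event in (enrich(parse_ssh(raw_ssh, year=2024)), enrich(parse_firewall(raw_fw))):
        print(json.dumps(event, indent=2))
```

Both events end up with the same `@timestamp`, `source.ip`, and `source.port` fields in the same types. That shared shape is what allows a single Kibana query or dashboard to cover SSH and firewall activity together. In Logstash itself, the equivalent logic would be declared in the `filter` section of a pipeline configuration rather than written as code.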

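The case for a queue in front of Logstash, made in the Log Collection discussion, is essentially about rates. During a burst, events arrive faster than the downstream stage can process them, and without somewhere to hold the excess, that excess is lost. The toy Python model below is a deliberate simplification that says nothing about how Kafka, Redis, or RabbitMQ work internally. It counts processed and dropped events for a consumer that handles a fixed number of events per tick, with a buffer of a given size in front of it. The burst profile, capacity, and buffer sizes are arbitrary assumptions.

```python
from typing import Sequence, Tuple


def simulate(arrivals: Sequence[int], capacity: int, buffer_size: int) -> Tuple[int, int]:
    """Return (processed, dropped) for a shipper -> buffer -> consumer chain.

    arrivals:    events arriving from the shippers in each tick
    capacity:    events the consumer (think: a Logstash instance) handles per tick
    buffer_size: events the queue can hold; 0 models "no queue at all"
    """
    backlog = processed = dropped = 0
    for n in arrivals:
        backlog += n
        handled = min(backlog, capacity)  # consumer works at a fixed rate
        processed += handled
        backlog -= handled
        if backlog > buffer_size:  # overflow has nowhere to go and is lost
            dropped += backlog - buffer_size
            backlog = buffer_size
    # Simplification: once arrivals stop, whatever is still queued gets processed.
    processed += backlog
    return processed, dropped


if __name__ == "__main__":
    burst = [100, 100, 500, 100, 100, 0, 0]  # one spike of 500 events
    for size in (0, 200, 1000):
        processed, dropped = simulate(burst, capacity=150, buffer_size=size)
        print(f"buffer={size:>4}: processed={processed}, dropped={dropped}")
```

With these numbers, 350 of the 900 events are lost without a buffer, 150 with a 200-event buffer, and none with a 1,000-event buffer, because the backlog is worked off during the quieter ticks that follow the spike. A broker such as Kafka also persists queued messages to disk, which is what helps with the component disconnects mentioned above, not just with bursts; this model does not capture that.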